Aerial navigation cognitive research

TELLgen Aerial Intelligence Technologies

TELLgen Aerial Intelligence Technologies develops Physical AI systems for UAVs, including drones, fixed-wing aircraft and high-speed aerial platforms. These systems are trained AI agents that perceive, interpret, decide and execute learned piloting skills in varied environments.

TELLgen Aerial Intelligence Technologies is the aeronautics-focused branch of TELLgen’s applied AI research, development and engineering work. It adapts a general Physical AI approach to aerial robotics: from requirement interpretation and agent design to data acquisition, training, validation and supervised deployment.

Research and engineering domains

Our work spans the complete lifecycle required to create intelligent aerial agents and Physical AI systems operating in dynamic real-world environments. We research and develop technologies, methodologies, and engineering processes for perception, situational awareness, decision-making, physical control, simulation, training, validation, and supervised autonomous operation in aerial environments.

Research on perception, spatial reasoning, environmental interpretation, learning and decision-making in aerial environments.

Design and integration of hardware, electronics and software stacks that enable intelligent aerial agents and prototype platforms.

Collection, structuring, analysis and validation of complete flight session data (vision, telemetry, control and mission data) for AI training.

Simulation environments, scenario generation and virtual training systems for controlled development, world model construction and validation.

Design, development, training and optimization of autonomous piloting agents, including supervised deployment, operational validation and mission adaptation.

Cognitive integration of aviation laws, AI regulations, operational constraints, ethical considerations, transparency, traceability and certification-oriented methodologies.

Physical AI factory

Our Physical AI research and development process transforms operational requirements into trained aerial agents through structured stages including mission analysis, cognitive adaptation, simulation, neural network design, data acquisition, dataset engineering, AI training, validation, optimization, and supervised deployment in real-world operational conditions.

This process also includes progressive cognitive capability development. Agents begin with elementary operational behaviors and advance toward more complex aerial knowledge and reasoning, spatial and environmental awareness, piloting skills and mission-oriented capabilities. Through training across different drone platforms, operational environments, flight conditions and mission contexts, agents gradually build transferable aerial knowledge and evolving operational competencies. These learned capabilities can then be refined, adapted and transferred to new aerial platforms and operational requirements through specialized transfer learning methodologies and continued training.

Definition of mission objectives, operational environments, aerial platforms, constraints, safety conditions and Physical AI capability requirements.

Definition of physical embodiment, sensor systems, telemetry integration, control interfaces, cognitive scope and aerial operational role.

Creation, acquisition, synchronization and preparation of real and simulated flight, telemetry and operational datasets for training and validation.

Progressive development of perception, situational awareness, interpretation, decision-making and complex piloting capabilities across aerial environments.

Safety evaluation, operational verification, robustness assessment, traceability analysis, supervised autonomous behavior evaluation and takeover capability validation.

Optimization, platform adaptation, transfer learning integration, supervised operation and continuous refinement of aerial operational capabilities.
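The dataset stage above mentions synchronizing flight and telemetry data. One common way to do this is nearest-timestamp alignment, sketched below. This is an illustration only, not TELLgen's pipeline: the function name `align_nearest`, the `max_gap_s` tolerance and the sample timestamps are hypothetical, and the sketch assumes both streams carry timestamps in seconds on a shared clock.

```python
from bisect import bisect_left
from typing import List, Optional, Sequence


def align_nearest(frame_ts: Sequence[float],
                  telem_ts: Sequence[float],
                  max_gap_s: float = 0.05) -> List[Optional[int]]:
    """For each frame timestamp, return the index of the nearest telemetry
    sample, or None when the nearest sample is further than max_gap_s away.

    telem_ts must be sorted in ascending order.
    """
    matches: List[Optional[int]] = []
    for t in frame_ts:
        i = bisect_left(telem_ts, t)
        # Candidates are the neighbours on either side of the insertion point.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(telem_ts)]
        if not candidates:
            matches.append(None)
            continue
        best = min(candidates, key=lambda j: abs(telem_ts[j] - t))
        matches.append(best if abs(telem_ts[best] - t) <= max_gap_s else None)
    return matches


# Hypothetical timestamps: ~30 fps video against 50 Hz telemetry.
frames = [0.00, 0.033, 0.066, 0.50]
telemetry = [0.00, 0.02, 0.04, 0.06, 0.08]
pairs = align_nearest(frames, telemetry)
```

Frames with no telemetry sample within the tolerance are marked `None` rather than paired with stale data, so they can be dropped or flagged during dataset validation.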

Aero AI

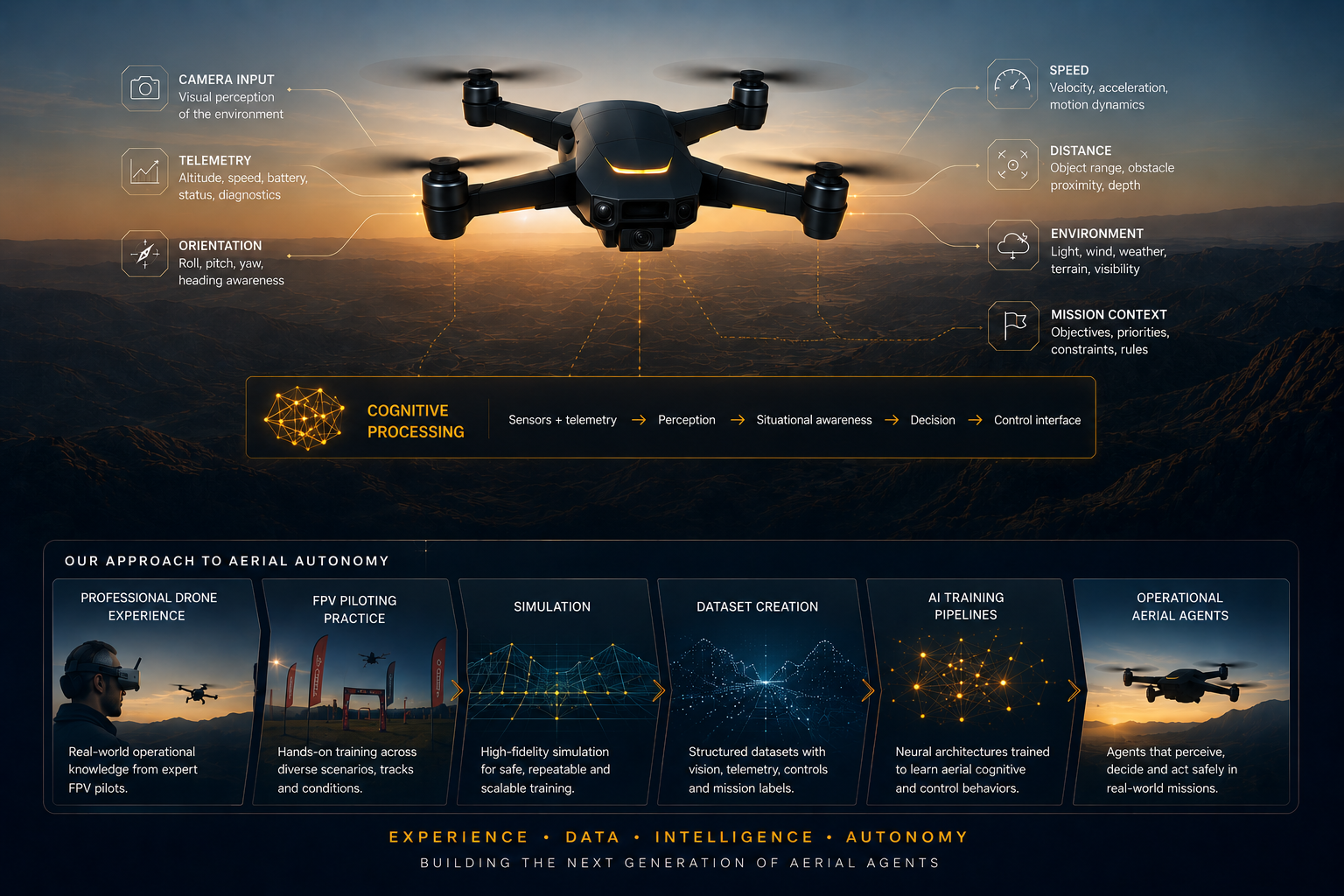

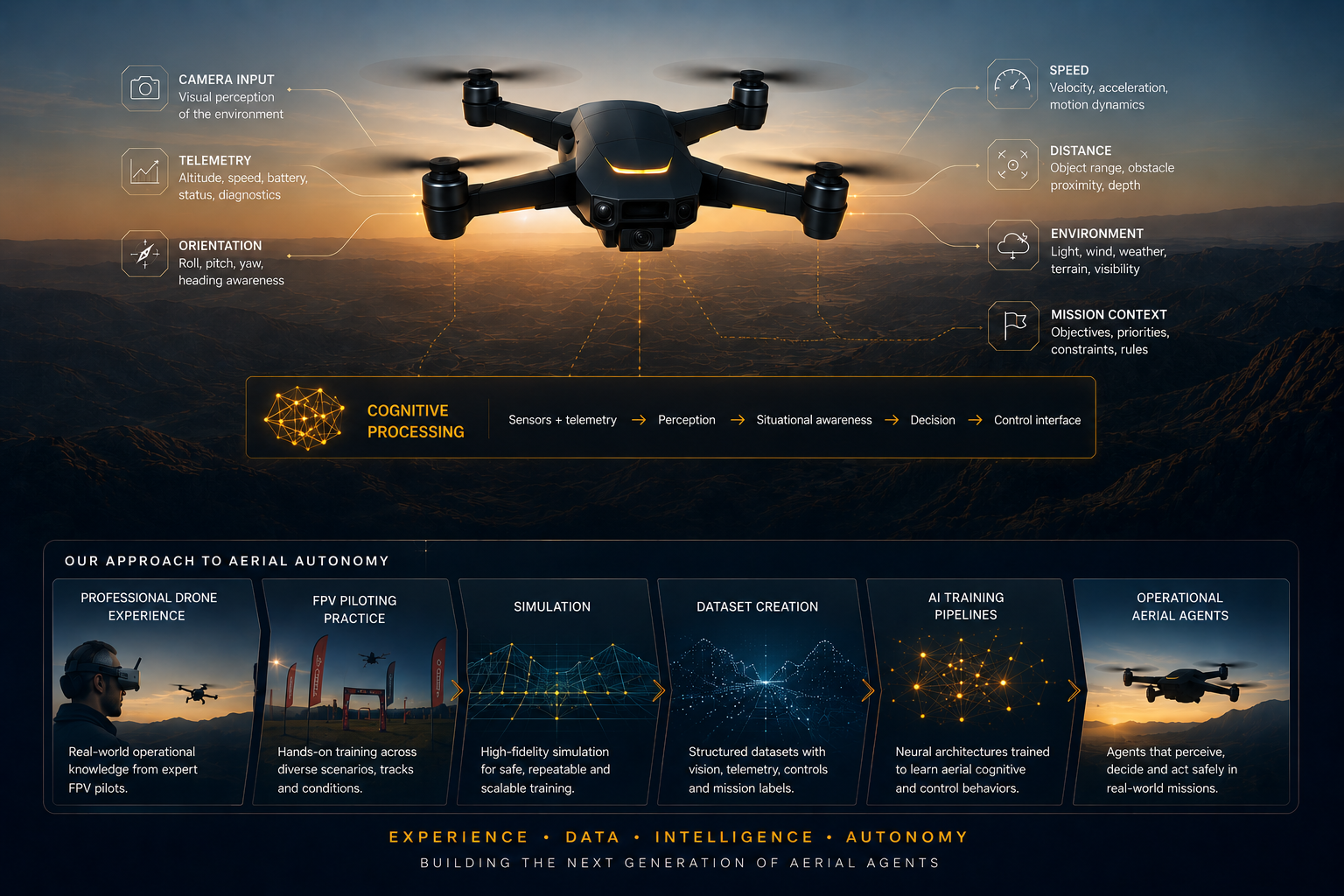

TELLgen studies drone piloting as a cognitive process, drawing on professional drone piloting experience, FPV piloting practice in simulation environments and real-world flight operations. For an aerial agent, flight is not only motion control. The agent must continuously interpret camera input, telemetry, orientation, speed, distance, environmental changes and mission context, then convert that understanding into safe and adaptive control behavior.

This perspective guides our dataset creation workflows and AI training pipelines. The result is a structured framework for building agents capable of learning operational aerial competencies. Our agents are also trained on avionics principles, drone piloting rules, safety policies, and regulatory frameworks related to high-risk AI systems and autonomous aerial operations.

Perception · Cognition · Control

The piloting process can be described, in simplified form, as a set of functional capability layers. Using onboard cameras, telemetry and environmental sensing, the aerial agent continuously receives operational data streams, much as a human pilot observes flight through FPV goggles and instrumentation. Specialized perception systems process this visual and telemetry information, interpreting the surrounding environment, movement, orientation, speed, distance and spatial dynamics in real time.

These interpreted inputs contribute to the agent’s situational awareness and operational reasoning capabilities, allowing the system to evaluate conditions, maintain orientation, understand mission context, and make continuous piloting decisions. The resulting actions are transmitted through the flight controller to maintain stable and adaptive flight operations across different aerial platforms, operational environments and conditions.

The entire cycle runs continuously and at high speed. The achievable rate depends on the deployed AI platform and its computational infrastructure.
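The layered cycle described in this section can be sketched as a simple loop. This is an illustrative sketch only, not TELLgen's implementation: the names `Observation`, `Command`, `perceive`, `decide` and `run_cycle`, the 10 m target altitude, the 5 m obstacle margin and the hand-written rule are all hypothetical stand-ins for trained perception and decision models.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Observation:
    """One synchronized sample of sensor input (hypothetical fields)."""
    altitude_m: float       # from telemetry
    obstacle_dist_m: float  # stand-in for a vision-derived estimate


@dataclass
class Command:
    """Control output handed to the flight controller (hypothetical)."""
    throttle: float  # 0.0 .. 1.0
    brake: bool


def perceive(obs: Observation) -> dict:
    """Perception layer: turn raw input into an interpreted state."""
    return {
        "altitude_error_m": 10.0 - obs.altitude_m,    # assumed 10 m target
        "obstacle_close": obs.obstacle_dist_m < 5.0,  # assumed 5 m margin
    }


def decide(state: dict) -> Command:
    """Cognition layer: a hand-written rule standing in for a trained policy."""
    if state["obstacle_close"]:
        return Command(throttle=0.5, brake=True)
    # Proportional altitude correction, clamped to the valid throttle range.
    throttle = min(1.0, max(0.0, 0.5 + 0.05 * state["altitude_error_m"]))
    return Command(throttle=throttle, brake=False)


def run_cycle(stream: Iterable[Observation],
              send: Callable[[Command], None]) -> None:
    """Control layer: run perceive -> decide -> act for every observation."""
    for obs in stream:
        send(decide(perceive(obs)))


# Collect the commands in a list instead of sending them to real hardware.
sent: List[Command] = []
run_cycle(
    [Observation(altitude_m=8.0, obstacle_dist_m=20.0),
     Observation(altitude_m=10.0, obstacle_dist_m=3.0)],
    sent.append,
)
```

Keeping the three layers as separate functions means a learned model could replace `perceive` or `decide` without changing the loop itself.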

Flagship research line

ADP is TELLgen’s research and development project for AI-based drone piloting intelligence. It studies and demonstrates how a Physical AI agent can learn piloting behavior from vision, telemetry, simulation environments and real flight experience. Its core capabilities are functional and validated in restricted operational environments.

For more information, visit the ADP Aero webpage, which describes the product line, the roadmap, and the deployment and safety model.

Responsible autonomy

Aerial Physical AI must be designed around human authority, regulatory awareness and operational accountability. We train our agents and develop our systems for supervised autonomous operation, treating human oversight, safety constraints, traceability and immediate intervention capability as essential elements of responsible deployment in aerial environments.

Agents are designed for supervised operation, defined control boundaries and immediate human takeover in critical phases.

Flight behavior must be logged, auditable and suitable for technical analysis, validation and post-operation review.

Respect for life, property, privacy, environment and mission limits is treated as a trained and validated capability.

Development is oriented toward aviation rules, EU AI governance, certification pathways and high-risk AI obligations.
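The principles above (supervised operation, defined control boundaries, immediate human takeover and auditable logging) can be illustrated with a small command arbiter. This is a minimal sketch under stated assumptions, not TELLgen's safety model: `AuditLog`, `arbitrate`, the `speed_mps` command field and the speed limit are hypothetical names chosen for the example.

```python
import json
from typing import List, Optional


class AuditLog:
    """Append-only record of arbitration decisions, one JSON line each."""

    def __init__(self) -> None:
        self.lines: List[str] = []

    def record(self, **fields) -> None:
        # sort_keys keeps entries stable and easy to diff during review.
        self.lines.append(json.dumps(fields, sort_keys=True))


def arbitrate(agent_cmd: dict,
              human_cmd: Optional[dict],
              max_speed_mps: float,
              log: AuditLog,
              t: float) -> dict:
    """Pick the command to execute for this control step.

    A human command, when present, always wins and is passed through
    unchanged. An agent command is clamped to the configured speed
    boundary before it is accepted.
    """
    if human_cmd is not None:
        chosen, source = human_cmd, "human"
    else:
        speed = min(agent_cmd["speed_mps"], max_speed_mps)
        chosen, source = {**agent_cmd, "speed_mps": speed}, "agent"
    # Log both what the agent requested and what was actually executed.
    log.record(t=t, source=source, requested=agent_cmd, executed=chosen)
    return chosen


log = AuditLog()
# Step 1: no human input, so the agent's request is clamped to the boundary.
step1 = arbitrate({"speed_mps": 12.0}, None, max_speed_mps=8.0, log=log, t=0.0)
# Step 2: the human operator takes over and commands a stop.
step2 = arbitrate({"speed_mps": 6.0}, {"speed_mps": 0.0}, 8.0, log, t=0.1)
```

Recording the agent's request alongside the executed command keeps every takeover and every clamped action visible in post-operation review.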

Applications

Flight intelligence becomes truly valuable when cognitive piloting capabilities can be adapted to different operational environments, missions and human activities. TELLgen researches and develops aerial Physical AI systems for industrial, scientific, operational and advanced piloting applications where autonomous perception, interpretation and control can support real-world tasks.

Collaboration

TELLgen Aerial Intelligence Technologies works with European research institutions, universities, drone and robotics companies, drone testing facilities, industrial partners and public organizations on Physical AI, aerial autonomy, simulation, datasets, aerial security and responsible deployment.